For me, training neural networks has always seemed prohibitively expensive. Surely training one to produce compelling results takes a very long time on very expensive computers, right?

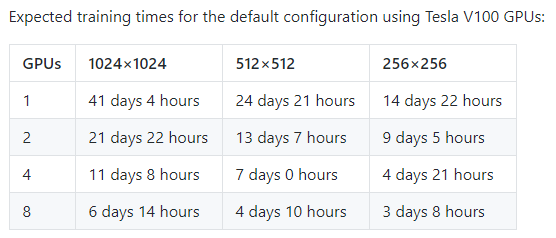

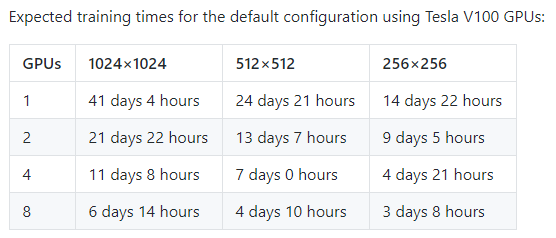

If you take a look at StyleGAN, you might think that. Like most projects pushing the state of the art, Nvidia is using the latest and greatest enterprise GPUs to produce their models. For high-resolution images like their faces dataset, they indicate that it would take 41 days of GPU time to train such a model, on a card that most people have limited access to. Their documentation also mentions needing 12GB of GPU RAM – more than most folks have lying around!

But is that the reality?

...

One of the elements of training neural networks that I’ve never fully understood is transfer learning: training a model on one problem, then reusing that knowledge to solve a different but related problem. I’m aware of the general idea, but the notion that a small amount of training on a small dataset can meaningfully improve the performance of a neural network is still strange to me.

That said, regardless of how strange it seems to me, from my experiments this weekend, it is clearly extremely effective.
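
To make the idea concrete, here is a toy sketch in plain Python. It is not the StyleGAN training code, and every name in it (`train`, `mse`, the `source` and `target` datasets) is made up for illustration. A one-dimensional linear model stands in for a neural network: it is first trained on a larger "source" problem, then given a handful of gradient steps on a small, related "target" problem, and compared against a model that gets the same handful of steps starting from zero.

```python
def mse(w, b, data):
    """Mean squared error of the linear model y = w*x + b on (x, y) pairs."""
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)


def train(w, b, data, steps, lr=0.05):
    """Plain gradient descent on y = w*x + b, starting from the given (w, b)."""
    n = len(data)
    for _ in range(steps):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Hypothetical "large" source problem: y = 3x + 2, with 41 samples.
source = [(k / 10, 3 * (k / 10) + 2) for k in range(-20, 21)]

# Hypothetical small, related target problem: y = 3x + 2.5, with 5 samples.
target = [(x, 3 * x + 2.5) for x in (-1.0, -0.5, 0.0, 0.5, 1.0)]

# Long training run on the source problem.
pretrained = train(0.0, 0.0, source, steps=500)

# Same short training budget on the target problem, two starting points.
fine_tuned = train(*pretrained, target, steps=10)
from_scratch = train(0.0, 0.0, target, steps=10)

loss_fine_tuned = mse(*fine_tuned, target)
loss_from_scratch = mse(*from_scratch, target)
print("fine-tuned loss:  ", loss_fine_tuned)
print("from-scratch loss:", loss_from_scratch)
```

With the same ten steps, the model that starts from the pretrained weights should end up with a much lower loss on the target data, because most of what it needs was already learned on the source problem. Real transfer learning with deep networks is far more involved, but the intuition is the same.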

...

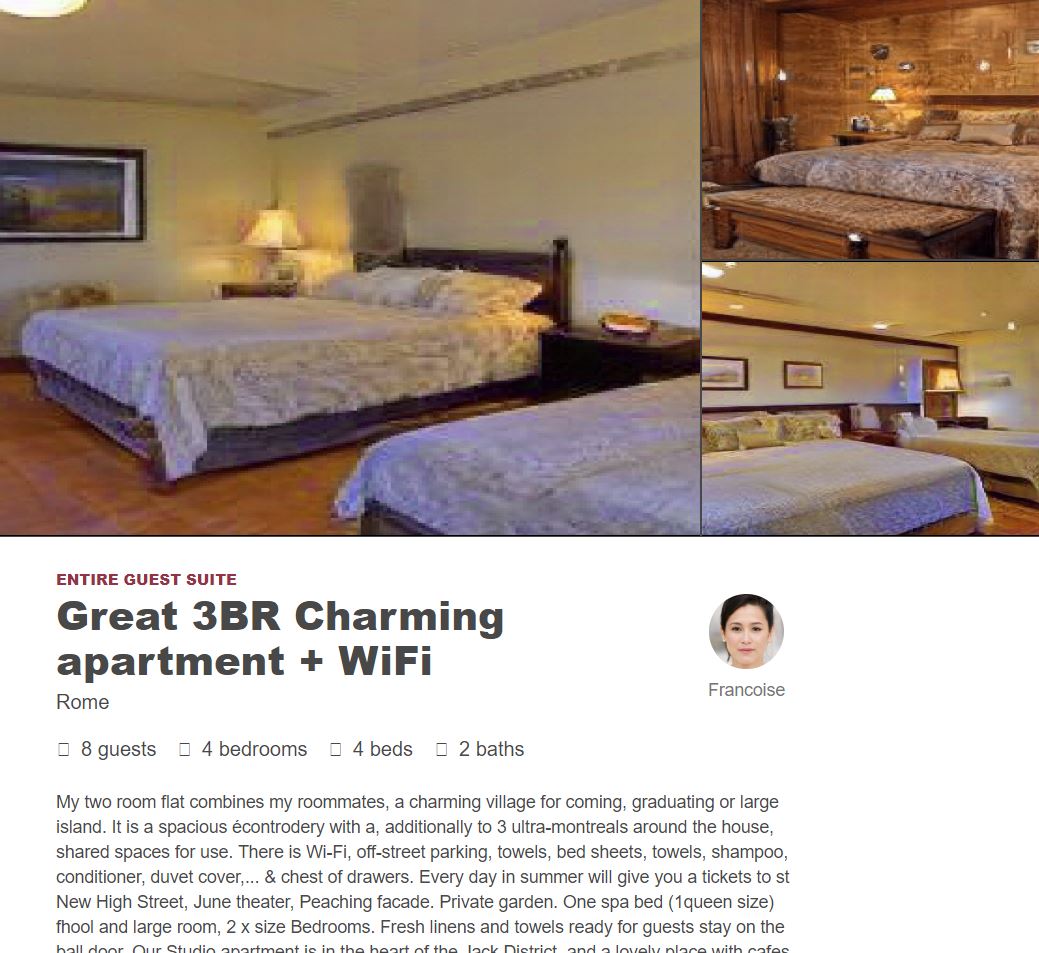

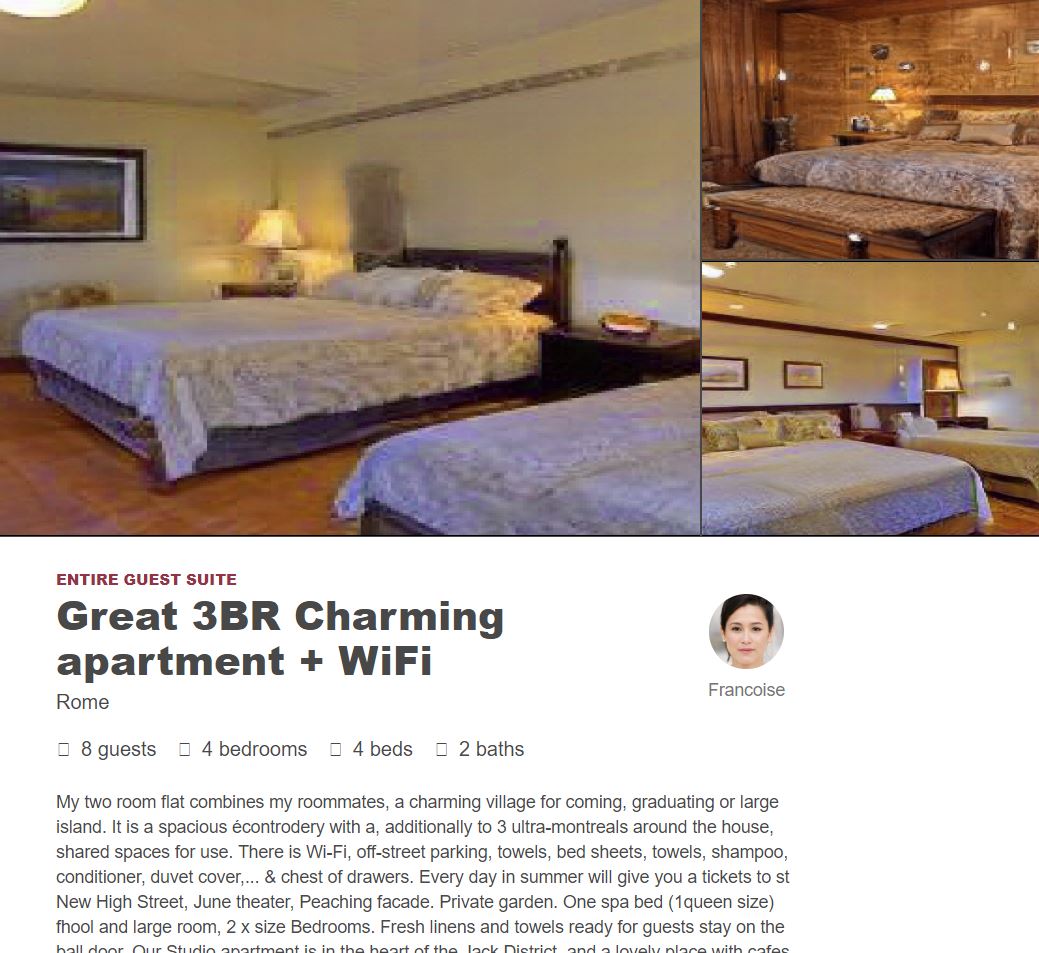

When I started out this weekend, I did not expect that I would be using the fevered dreams of computer-driven neural nets to invent imaginary Airbnb listings, but here we are.

...

One of the most interesting 3D models that I’ve had the opportunity to print is the Little Digger, a piece created by Joe Ethington in 2013. This innovative print is a 7-piece model that prints in place, making a quick, fun toy for people to play with – and, in the process, demonstrating some of the ways that you can create interesting or surprising prints.

In an upcoming series of videos, I’ll be breaking down how 3D printers work – specifically, the fused deposition modelling (FDM) 3D printers typically available in maker spaces and in people’s homes....